Turbocharged data analysis could prevent gravitational wave computing crunch

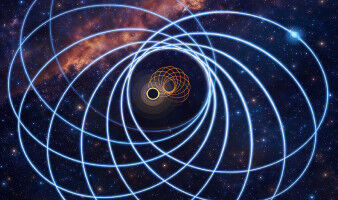

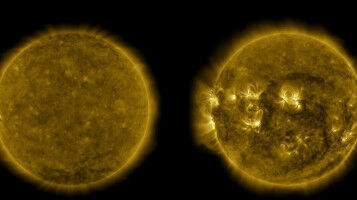

A new method of analysing the complex data from massive astronomical events could help gravitational wave astronomers avoid a looming computational crunch.

Researchers from the University of Glasgow have used machine learning to develop a new system for processing the data collected from detectors like the Laser Interferometer Gravitational-Wave Observatory (LIGO).

The system, which they call VItamin, is capable of fully analysing the data from a single signal collected by gravitational wave detectors in less than a second, a significant improvement on current analysis techniques.

Since the historic first detection of the ripples in spacetime caused by colliding black holes in 2015, gravitational wave astronomers have relied on an array of powerful computers to analyse detected signals using a process known as Bayesian inference. A full analysis of each signal, which provides valuable information about the mass, spin, polarisation and inclination of orbit of the bodies involved in each event, can currently take days to be completed.

Since that first detection, gravitational wave detectors like LIGO in the USA and Virgo in Italy have been upgraded to become more sensitive to weaker signals, and other detectors like KAGRA in Japan have come online. As a result, gravitational wave signals are being detected with increasing regularity, putting the current computing infrastructure under greater strain to analyse each detection.